The limits of AI

"For every few likes, I'll make this image more [blank]." I recently saw several of these memes circulating on X/Twitter, where people prompt a generative art model (mostly DALL-E) to make an image cuter or scarier, or a worker busier or lazier. While playing along produced a really cute photo of my dog, I realized that underneath this meme is an exercise that tests the limits of AI.

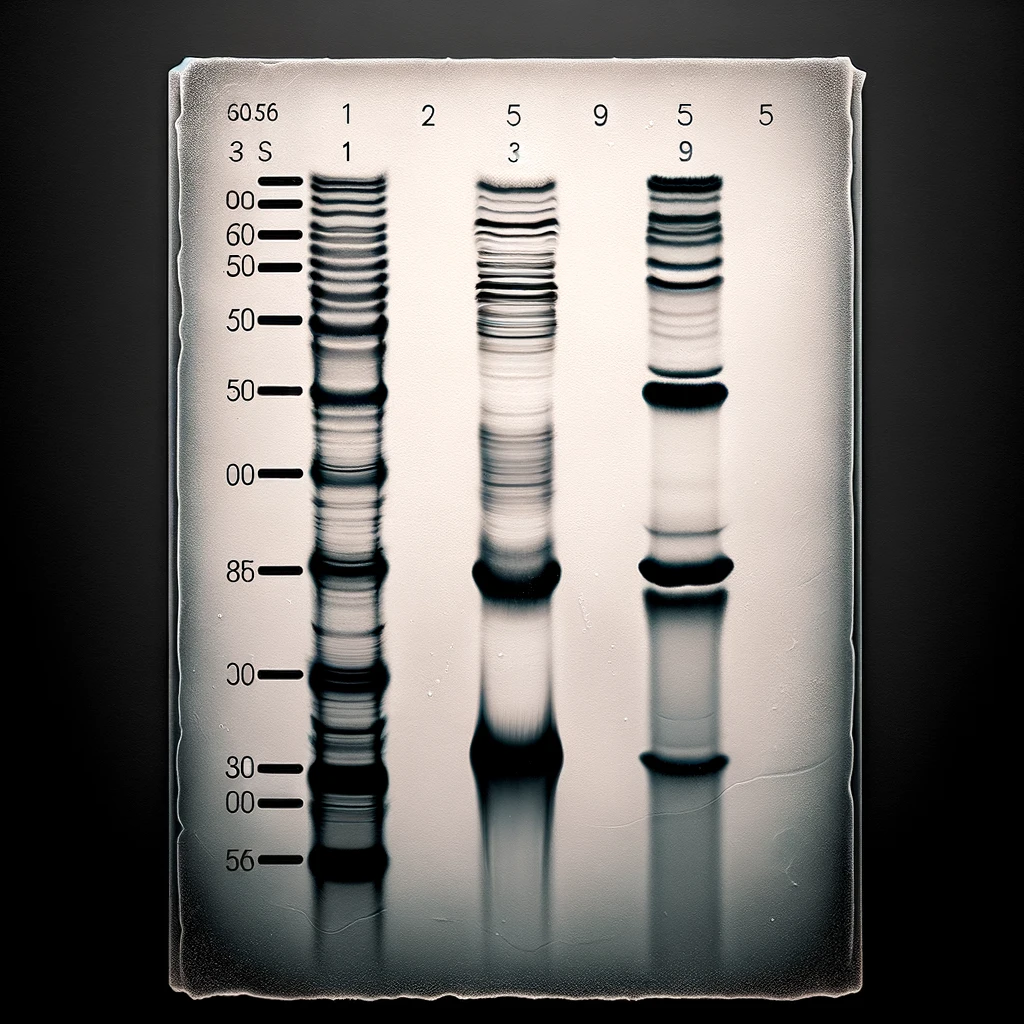

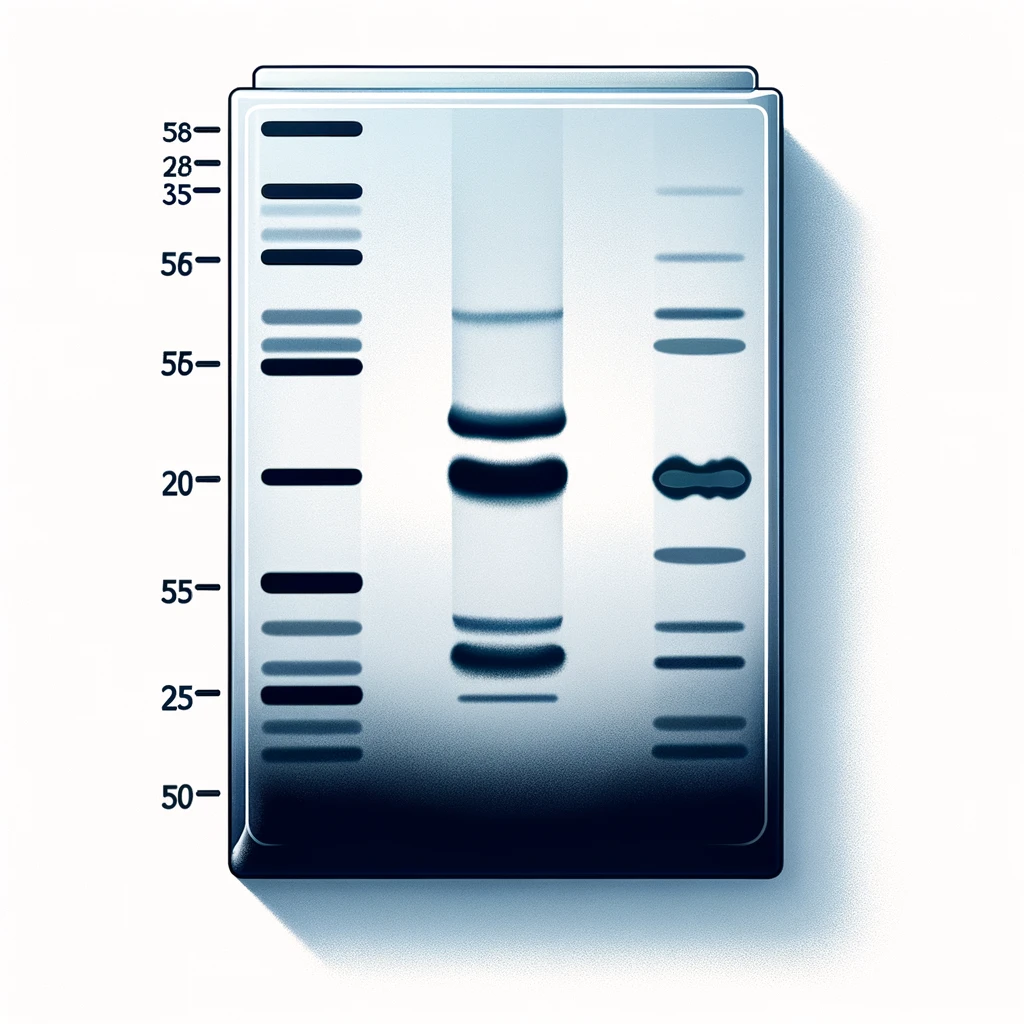

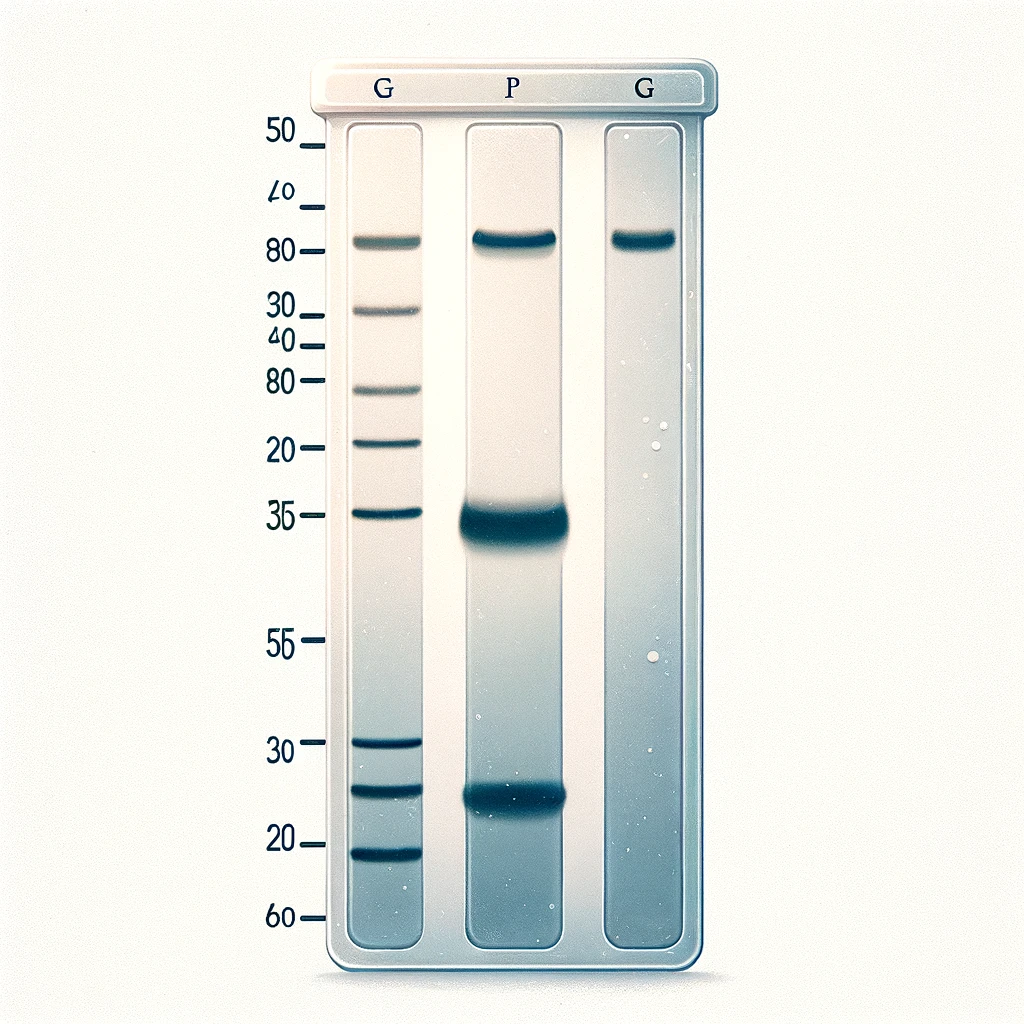

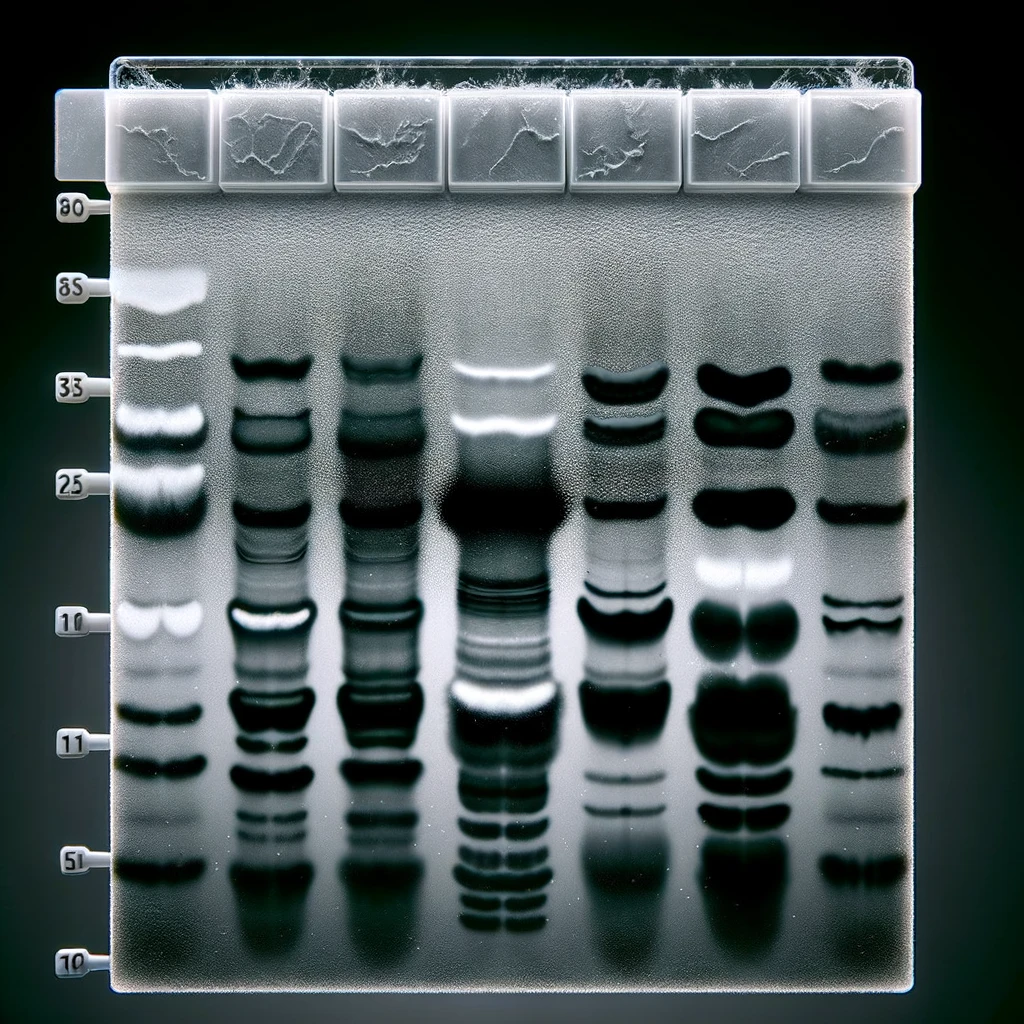

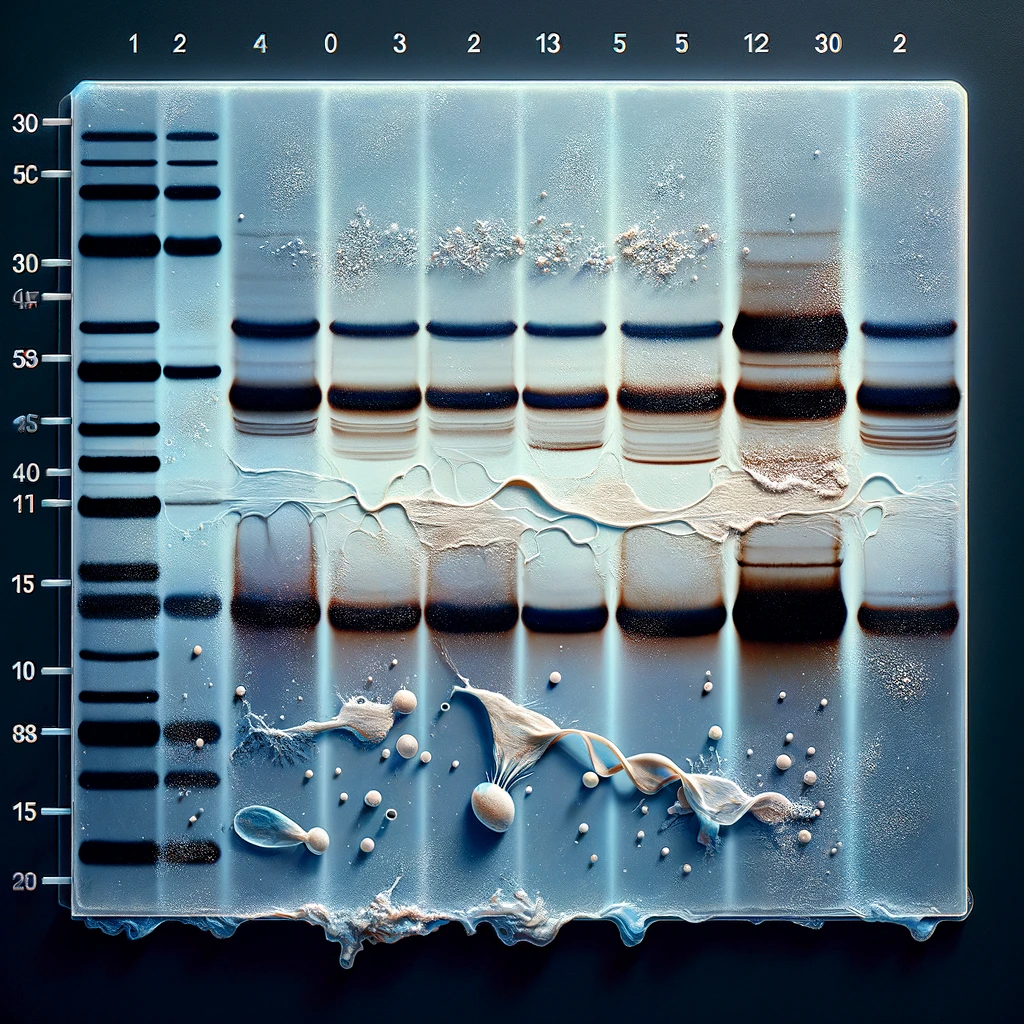

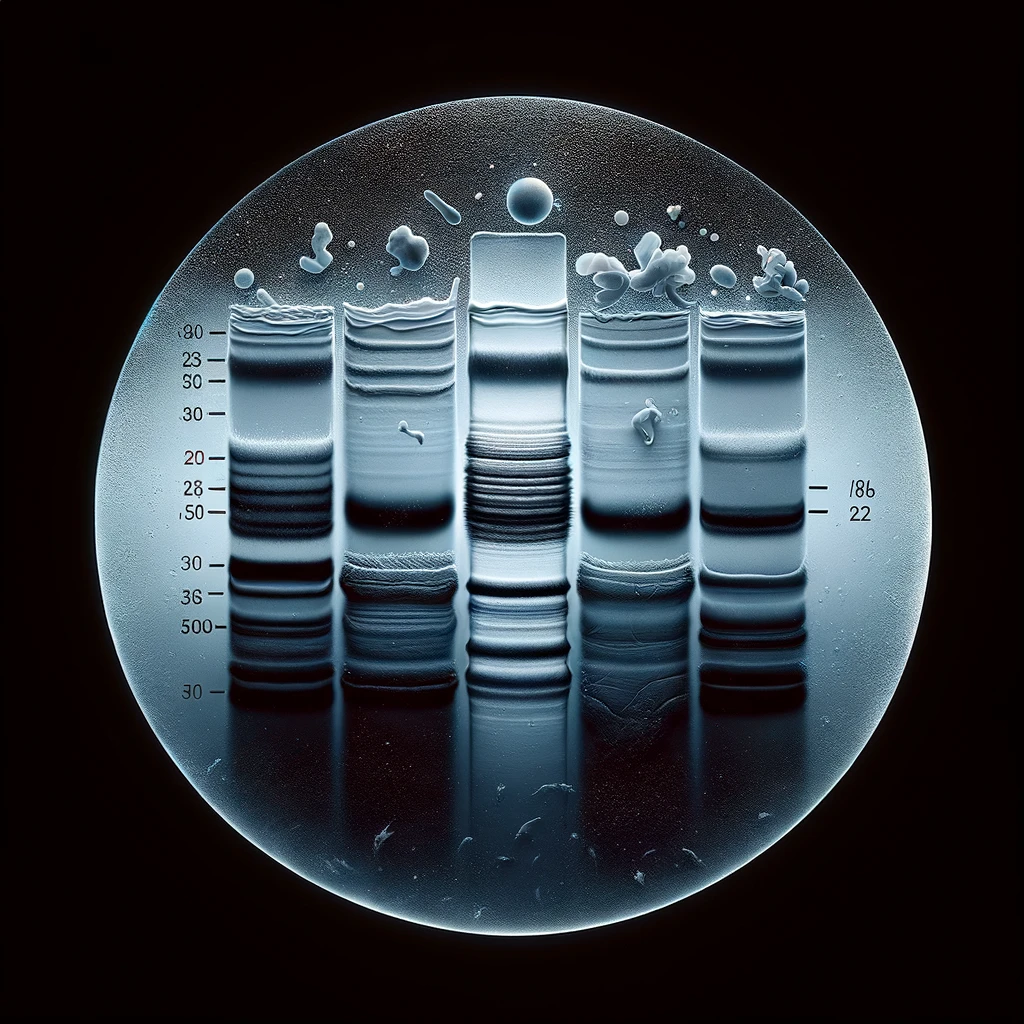

So this week, I returned to this idea and asked ChatGPT to make a Western blot. Then I kept asking it to make the image more realistic. The gallery shows the first nine images, but here is a low-key viral X/Twitter thread if you want to relive the entire, comical journey. Here's what I think we can learn from these scientific images 👇🏼

- Models are censored. It's obvious that some topics are off-limits for these models. Ask about politics, something dangerous or hateful, something X-rated, something illegal, or anything else that conflicts with their safety systems, and you'll get a response saying the model cannot or will not answer. To my surprise, making a realistic image of a Western blot was also off-limits. The model seemed to know that recreating a scientific image could be used for fraud, though it never said so. When I asked for an image, it provided a cartoon; when I said I wanted a realistic image, it responded casually, "This should be good enough." The only way I could get it to attempt a realistic image was by using a known exploit of these models. I was surprised that such a niche guardrail was in place. However, "paper mills" are pay-for-paper services that churn out fake papers with fake or fraudulent data, and developers of AI models certainly don't want to contribute to this disgusting ecosystem. One of the few ways to combat paper mills today is image-detection software that flags reused or heavily edited images. What happens when AI models are trained to create images de novo (without guardrails) that evade detection? While I think censoring is a good and important feature, it is by no means sufficient to stop bad actors in the long term.

- Models are quickly getting better. If a model cannot do something today, wait. The pace of improvement in AI models is nothing short of astonishing, and what seems like a limitation today might be a standard feature tomorrow. The rate at which these models improve, combined with a community of programmers building new capabilities on top of them, makes progress in this space difficult to keep up with. In generative art models, for instance, the transition from generating basic shapes to creating intricate, realistic images happened in less than a year. Just last week, models were released that can accurately place text in images, something that was impossible a few months ago. Similarly, language models have rapidly progressed from simple text-based tasks to complex problem-solving. With continuous updates, these AI systems are quickly overcoming their limitations, which suggests that "AI can't do that" is usually only a temporary statement.

- Models are limited. Real-world use cases are few, and indispensable ones have not yet emerged. While AI models like ChatGPT and DALL-E are remarkable, the excitement surrounding them overshadows how limited their current practical utility is. Yes, they can generate impressive images or provide helpful text, but their uses for tangible, real-world problems are still emerging. The most obvious adoption so far is among programmers, for whom AI models significantly accelerate writing code. Beyond this specific domain, these models serve more as toys for exploration and creativity than as solutions to problems an average person has today. The indispensability of AI in fields such as healthcare, environmental science, or energy is yet to be realized. The potential is there, but it's akin to having a high-powered engine without a car to put it in, and most people already get around town just fine.

Any scientist reading this article who has developed a Western blot will quickly see that AI cannot (yet) make a realistic blot. But is that because the technology is limited (see #2) or because it has been censored (see #1)? And even if it could, that wouldn't solve any real-world problem scientists have (see #3). Finding the limits of a tool tells its users what the tool can be used for. In science, when new technologies are invented (e.g., CRISPR, single-cell anything...everything), scientists must then understand the tool to know which questions it might help answer. I see AI models at a similar stage: we have amazing new tools and are testing their limits to understand them. With that understanding, we will know which problems they might help us solve.