Scientific judgment under uncertainty

A young graduate student in my lab once shredded a paper she had read, finding major flaws in the logic and results and ultimately concluding it was garbage. Her skepticism of the work was on point: the published study had problems. Later, that same graduate student presented her own work. While preliminary, it too had some problems. To my surprise, she hadn't seen them. How is it that this student can be skeptical about what she reads but not skeptical about her own work? How is it that we are quick to be skeptical of science but less skeptical of news? Why this difference?

Compartmentalizing our skepticism based on how well we know something is the default. We are more skeptical about things we know well than about things we don't understand. An expert quickly sees the flaws; a novice does not.

We are less skeptical of, and quicker to judge, things that we know little about. So how do we maintain skepticism in the face of uncertainty? Daniel Kahneman's Nobel-winning work with Amos Tversky, "Judgment under Uncertainty: Heuristics and Biases," describes human nature’s tension between quick thinking and slow thinking, personified in his tome Thinking, Fast and Slow. Quick thinking allows split-second decisions but is also the root cause of our biases. Slow thinking is the rational mind that explores, then evaluates. Kahneman lays out the most common types of bias and argues that understanding our biases and having a language to describe them is the first step toward overcoming these quick-thinking fallacies.

The young graduate student might be succumbing to confirmation bias (finding patterns that support previous beliefs), anchoring bias (putting too much weight on the first idea), or availability bias (judging by whatever information comes most readily to mind). Likewise, we might put too much faith in the news because of the framing effect (being presented information from a specific viewpoint), the bandwagon effect (believing information because others do), selection bias (being presented with only specific information), or any other of the dozens of human fallacies that have been discretely documented.

So then how do we remain skeptical? First, withhold judgment. Holding two opposed ideas in your mind at the same time is the test of a first-rate intelligence, quipped F. Scott Fitzgerald. The information could be true, or it could be false. Or some elements are true, and others false. It's complicated. Second, have 'strong opinions, weakly held'. Being confident in your viewpoint until presented with opposing information is probably a better test of a first-rate intelligence.

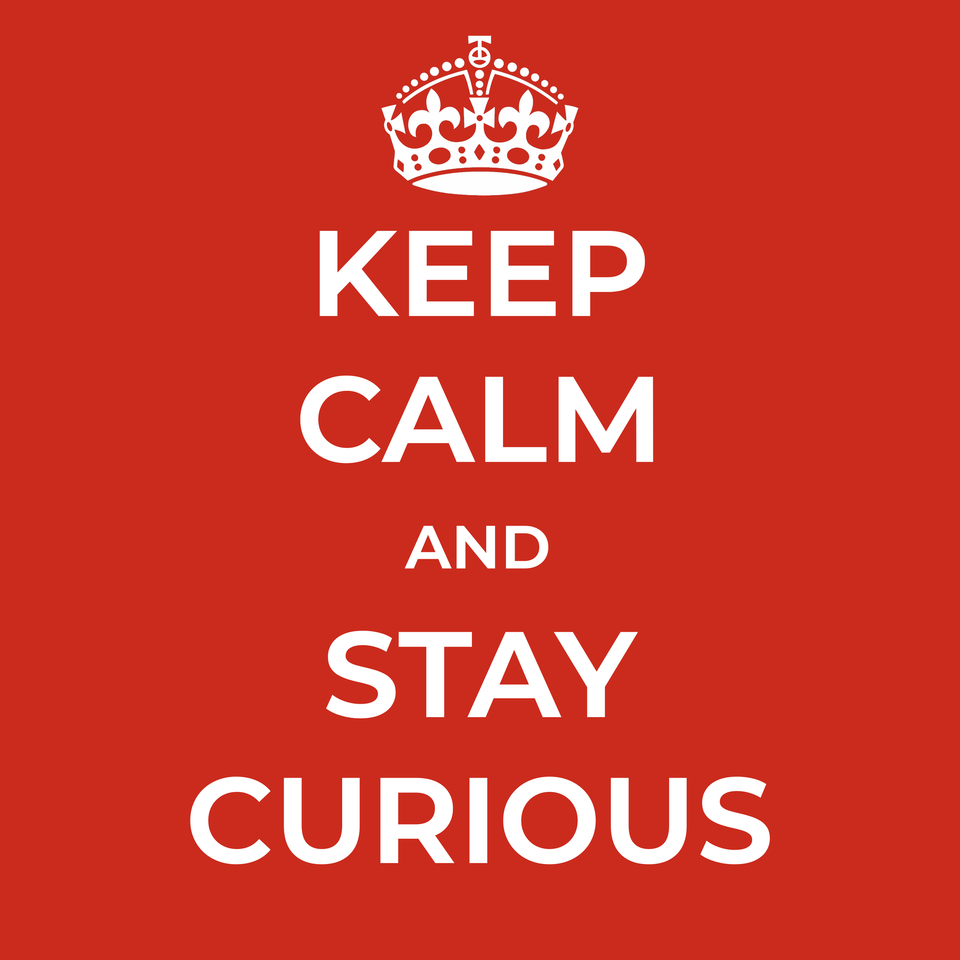

Pushing the limits of knowledge in science necessarily means that no one is an expert. You will be wrong. So when presented with new information, remain skeptical both of the information and of your opinion on it (but not too skeptical). In science, and in life, we all need to keep calm, stay curious, and carry on*.

*Keep Calm and Carry On was a motivational poster produced by the British government in 1939 in preparation for World War II. The poster was intended to raise the morale of the British public, who were threatened with widely predicted mass air attacks on major cities. While not the first derivative of the slogan, the version I made above seemed more fitting to raise the morale of those who must judge under uncertainty. I liked it so much, I made a poster. If you want one, grab it here 👇