A funding problem

In 1999, the National Institutes of Health (NIH) set a modular budget of $250,000/year. This meant that a researcher could ask for a set amount of money without having to justify each dollar; if they wanted more, then they needed to justify it. In the past 20+ years, the modular budget has not moved; in 2022 dollars, the NIH would need to set the modular budget at $431,000 just to adjust for inflation. Even so, this does not account for changes in technology (science is more expensive today) or changes in applicant competitiveness (biotech companies routinely hire entry-level scientists at wages higher than professors). Ask any academic scientist about the hardest part of the job, and they will undoubtedly say funding.
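To put the inflation gap in concrete terms, here is a quick back-of-the-envelope calculation. It uses only the two figures quoted above; the variable names are mine, and the "buying power" framing is a simplification that ignores the technology and salary pressures just mentioned.

```python
# Figures quoted in the text above.
MODULAR_BUDGET_1999 = 250_000      # nominal modular budget, unchanged since 1999
INFLATION_ADJUSTED_2022 = 431_000  # what that budget would need to be in 2022 dollars

# Cumulative price increase implied by those two numbers.
multiplier = INFLATION_ADJUSTED_2022 / MODULAR_BUDGET_1999

# Share of the original buying power that a flat $250,000 retains in 2022.
buying_power_share = MODULAR_BUDGET_1999 / INFLATION_ADJUSTED_2022

# Today's modular budget expressed in 1999 dollars.
real_value_1999_dollars = MODULAR_BUDGET_1999 * buying_power_share

print(f"Implied inflation multiplier: {multiplier:.3f}")            # 1.724
print(f"Buying power retained: {buying_power_share:.0%}")           # 58%
print(f"Value in 1999 dollars: ${real_value_1999_dollars:,.0f}")    # $145,012
```

In other words, a flat modular budget buys a little under 60% of what it did when it was introduced.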

This one has been long time in making!

— Katsu Funai (@KatsuFunai) April 22, 2022

2014 R15: 42

2014 ADA BS: ND

2015 R15: 32

2017 ADA IBS: ND

2018 ADA IBS: 22%

2019 ADA IBS: recommended for funding then canceled with COVID-19

2020 R01: ND

2020 DoD: ND

2021 R01: 45%

2021 DoD: 2.5, not funded

2022 R01: Scored below payline

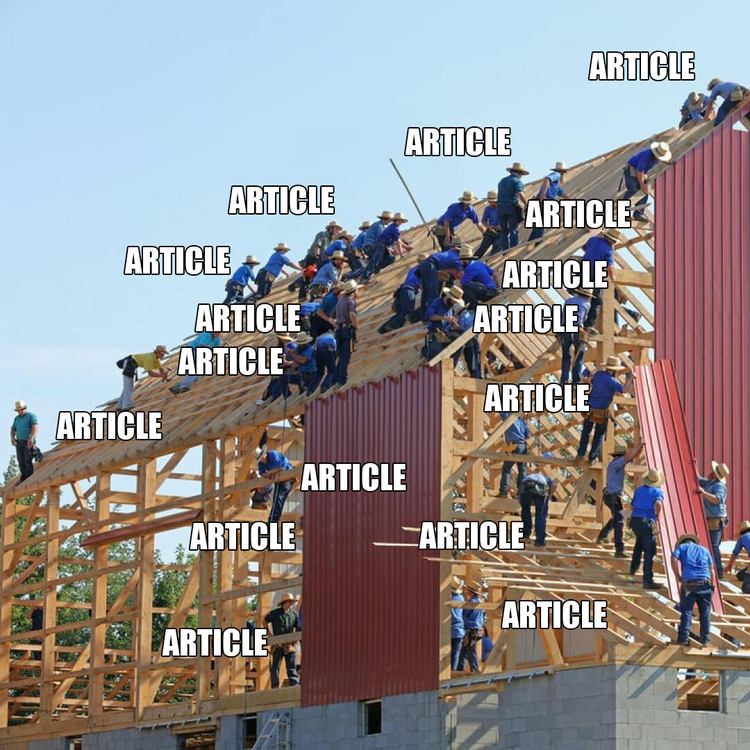

But small grant budgets are not the only funding problem; too few dollars are invested in biomedical research. The funding pay lines are often below 10%, meaning that fewer than 1 in 10 grants are funded. I spent the first 3 years of starting my lab writing grants, not "doing science". In my first year as an assistant professor at Duke, I wrote 23 grant applications. In my second year, I wrote 26 grant applications. Why? Probably an unhealthy combination of fear of failure and nightmare-ish pay lines.

To explain my behavior, let me first explain how the current system works. The first step is to write either a generic application that says "I want to do this interesting science" or a specific application that says "I’m going to do this interesting science, which is also exactly the kind of science that you want to fund". The specific grants can come from foundations or from government agencies in response to requests for applications (RFAs; e.g. the National Cancer Institute's current RFAs). Write the grant and submit it by the deadline. The next step is to wait, very, very patiently. The grant is first administratively reviewed to make sure your margins, font sizes, and text density all fall within the proper requirements. Not joking here. If they do not, then your grant is administratively rejected. They also make sure that it is the type of grant they are interested in funding. For example, you cannot write a grant to study dinosaurs and submit it to the NIH, because the link between dino-DNA and human health has not yet been established. If the funders think that your grant does not fall within their area of interest, it is administratively rejected, as was mine👇.

If you write @NIH grants, the three letters you never want to see

— Matthew Hirschey💡 (@matthewhirschey) March 3, 2022

N.F.P. pic.twitter.com/2DCG0MxtAf

After this first level of review, the grant is assigned to grant reviewers. There are several different flavors of this, but essentially a group of scientists is asked to review the proposal and score the criteria that are deemed most important. For the NIH, they score significance, innovation, approach, investigator, and environment. After individual reviewers score the grant, the next step is to collectively review the scores and discuss whether the reviewers are in agreement. In the setting of disagreement, there is an open discussion, and ultimately a vote on the final score. Then, the grant agency ranks the applications from the most impactful and most promising down to the least. A line is then drawn, called the pay line, which determines which grants are funded. The pay line is set primarily based on the budget. High budget = higher pay line. Lower budget = lower pay line. Some grants need to score within the top 5% in order to be considered for funding; however, most pay lines at the NIH are around 10%. That means 90 to 95% of the grants are not funded each round.
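The rank-then-cut step can be sketched in a few lines. This is a toy illustration, not the NIH's actual procedure: the function name, the made-up scores, and the convention that a lower score is better are all assumptions for the example.

```python
def apply_pay_line(scores, pay_line_pct):
    """Split applications into funded and unfunded lists.

    scores: dict mapping application name -> review score
            (assumption for this sketch: lower is better).
    pay_line_pct: whole-number percentage of applications the budget can cover.
    """
    # Rank from best (lowest score) to worst.
    ranked = sorted(scores, key=scores.get)
    # Draw the line: only the top pay_line_pct percent are funded.
    n_funded = len(ranked) * pay_line_pct // 100
    return ranked[:n_funded], ranked[n_funded:]


# Hypothetical round: 20 applications with invented scores from 10 to 86.
scores = {f"grant_{i:02d}": 10 + 4 * i for i in range(20)}
funded, unfunded = apply_pay_line(scores, pay_line_pct=10)

print(funded)         # the 2 best-scoring applications
print(len(unfunded))  # the remaining 18 go unfunded this round
```

Notice that the cutoff depends only on rank and budget: the third-ranked grant here misses out no matter how small the gap between it and the second.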

Each study section will have approximately 50 to 100 grants assigned to it, which is too many to discuss. To reduce reviewer burden, the grants with the worst initial scores are not even discussed. Most study sections triage at least 50% of grants as not discussed in order to spend time on the grants that will most likely be funded. After the grants are scored, the scores are released. Then, about three months later, the funding agency will determine which grants they’re going to fund. Ultimately, the funding agency has the final say on whether to fund or not fund any grant. For example, if a grant scores in the fundable range but falls outside the priorities of the funding agency, they can choose not to fund it. Conversely, if a grant misses the pay line but the funding agency thinks that it is an important line of investigation, they can choose to fund it.

From submission date to notice-of-award date, the time is at least six months for small agencies and up to a year for government agencies. And that doesn’t even take into consideration the amount of time it takes to prepare the grant. Imagine writing a paper in school and not getting feedback on that project for 12 months. Imagine submitting a proposal to bid on a project, and not knowing for a year whether you will do the work. Laying out this absurd process highlights several problems, and an opportunity to envision solutions.

- Too little money. One of the primary problems with this whole system is that there is not enough money to fund science. Good grants are not getting funded. Bad grants are not getting feedback. Why did I write so many grants in my first few years? Candidly, it is a numbers game. You cannot know which grants are going to get funded. So my approach was to try to get rapid feedback on the proposals I was writing, both internal and external, in order to learn how to write a grant and to increase my chances of getting one. The problem is not the "hard work" of getting funding. There’s nothing intrinsically wrong with hard work. The problem lies in the fact that during the first two years of starting my lab, I was not doing any science. Instead, I turned into a professional writer. I woke up; I wrote all day, and I went home; I woke up the next day, and the cycle continued. I was fortunate enough to hire senior, talented research scientists to keep the experiments going while I was writing. So in this way, I was making tangible research progress; it’s just that it wasn’t with my hands. Instead, I was writing, rewriting, submitting, and resubmitting in order to try to get scored below that magical pay line.

- Too little feedback. The second major problem is the amount of time it takes to get feedback. Learning only happens from rapid feedback. Imagine turning in a project, and not getting feedback for one year. If my research project has a fatal flaw, I would want to know quickly. Furthermore, starting and stopping experiments takes time. Stopping an experiment is not like flipping off a switch that you can simply flip back on when you come back into the room. Starting and stopping projects is slow, technical, and intentional. It is impractical to wait six months for feedback on an active line of investigation.

- Too much randomness. The major consequence of the current funding system is that there is too much randomness in it. Randomness can be a good thing, like exposure to new ideas, but not when tied to learning. Learning requires a stable environment. Consider the difference between poker and chess: chess has a right answer, with no chance and no randomness; it is all objective, with no subjectivity. Poker is the opposite: you have to consider what the other player is thinking, what they are doing, and what they think you are thinking. Writing grants is treated like chess, but it's more like poker. The determination between a funded grant and unfunded grant is entirely chance.

If we were designing a new grant funding system today, one that leverages the tools and technologies of today, what would it look like? Channeling the decentralization movement captured by Web3, we might also decentralize grant funding. Too little money is earmarked because Congress approves budgets for major funding agencies like the NIH and National Science Foundation (NSF) and has deemed other programs a higher priority. What if more people could donate to more foundations or to labs directly, which could fund positions or lines of investigation? Currently, easy mechanisms for a donor to give money directly to a lab don't exist, especially for what might be considered "small donations". Forty people giving $100/month is enough to support a graduate student each year. That is 40 subscribers to graduate-students-as-a-service (GSaaS): the power of monthly recurring revenue (MRR) applied to scientific funding. Small labs or larger foundations could leverage smart contracts to reduce administrative overhead. Patients or families affected by disease could directly support research without requiring non-profit organizations. Getting more people involved directly in funding scientific research could be good for the whole scientific enterprise.
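The subscription arithmetic is simple enough to check directly. In this sketch the 40 donors and $100/month come from the paragraph above; the helper `donors_needed` and the idea of plugging in other gift sizes are my own illustration, and the annual figure is just what those two numbers imply, not an official stipend.

```python
def annual_support(donors, monthly_gift):
    """Total dollars raised per year from recurring monthly gifts."""
    return donors * monthly_gift * 12


def donors_needed(annual_cost, monthly_gift):
    """Smallest number of monthly donors that covers annual_cost (rounds up)."""
    per_donor_per_year = monthly_gift * 12
    return -(-annual_cost // per_donor_per_year)  # integer ceiling division


# Figures from the text: forty people giving $100/month.
student_cost = annual_support(donors=40, monthly_gift=100)
print(student_cost)  # 48000 dollars per year

# Hypothetical variation: the same position funded by $25/month gifts.
print(donors_needed(student_cost, monthly_gift=25))  # 160 donors
```

The point of the recurring model is predictability: a lab can plan a position around a known monthly total rather than a once-a-year funding decision.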

But even with more money in the system, grants need to be reviewed. I declined an invitation to be a standing member of a grant review group (serendipitously) right before the pandemic struck. Why? Because I could not agree to review 10+ grants for each of three yearly cycles. Instead, I agreed to serve as an ad hoc member, reviewing grants as needed for different grant review panels. In this way, I can provide an important community service, but only when I can devote the required time. This sentiment of too many grants is shared by several colleagues. What if more scientists participated in the grant review process, thereby alleviating the grant review burden? What if grant reviewers were compensated and therefore incentivized for their time? What if grant reviewers could opt in to review grants and provide written feedback within a short window of time, which was then shared with applicants immediately?

In poker, "resulting" is when players use the outcome of a hand as a heuristic for whether it was a good play. Unfortunately, the outcome of any process skews the perception of whether the decisions behind it were good ones, which is a fatal decision-making mistake. When chance is involved, the outcome of a single decision says little about the quality of the process used to make it. But human nature is to take credit for the good and attribute the bad to bad luck. This is a type of self-serving bias that preserves long-term identity. Wrote a good grant that was funded? Probably because it was a beautiful grant. Wrote a bad grant that missed the pay line? Probably bad luck.

The best decision-makers apply a process to their decisions, especially with unknown or unknowable information. We cannot know who is going to review grants, what they will eat for breakfast, or whether they will be in a good mood. More practically, we cannot know what other grants ours will be judged against (just as you cannot know whether your poker opponent has a strong or weak hand). But we can learn to apply a process to writing grants. And perhaps we should engineer randomness into grant funding. What if grants that achieved a certain threshold were then entered into a pool for possible funding? Weed out the bad grants with fatal flaws, but if the good grants cannot all be funded, then hold a lottery for funding. Grants that land just inside and just outside the current pay line are indistinguishable, and which side they fall on is completely random; formalizing this would save a lot of scientist heartache. More importantly, it would also give projects a chance to flourish that might not otherwise. Who knew that esoteric academic research on engineering the stability of mRNA would be key to developing an mRNA-based COVID vaccine that pulls the world out of a pandemic?
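A threshold-plus-lottery scheme like the one described above is easy to prototype. The sketch below is one possible reading of the idea, not an existing agency mechanism: the function name, the lower-is-better score convention, the threshold value, and the example applications are all hypothetical.

```python
import random


def funding_lottery(scores, quality_threshold, n_awards, seed=None):
    """Pick grants to fund by lottery among those that clear a quality bar.

    scores: dict mapping application name -> review score
            (assumption for this sketch: lower is better).
    quality_threshold: worst score still considered free of fatal flaws.
    n_awards: number of grants the budget can support.
    seed: optional seed so that a draw can be reproduced and audited.
    """
    # Step 1: weed out applications that do not meet the threshold.
    eligible = sorted(name for name, s in scores.items() if s <= quality_threshold)
    # Step 2: if the budget covers every eligible grant, fund them all.
    if len(eligible) <= n_awards:
        return eligible
    # Step 3: otherwise draw winners uniformly at random from the pool.
    rng = random.Random(seed)
    return sorted(rng.sample(eligible, n_awards))


# Hypothetical round: six applications, budget for two awards.
scores = {"A": 12, "B": 18, "C": 21, "D": 25, "E": 47, "F": 63}
winners = funding_lottery(scores, quality_threshold=30, n_awards=2, seed=2022)
print(winners)  # two of A, B, C, D; E and F never enter the pool
```

Passing a seed makes the draw repeatable, which matters if applicants are to trust that the lottery was run fairly.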

Will these possible futures play out? We cannot know. But we can see what Lux VC Josh Wolfe describes as the 'directional arrow of progress'. The major trends in Web3 technology are toward decentralization. How this affects different fields will play out uniquely for each. The thought exercises laid out above are one possible future, one that I'm trying to build. But in the meantime, I'm back to writing grants the usual way. Perhaps I'll propose a modest $431,000 "modular" budget.